Mike Norris Out as Portland Thorns Coach in Staff Shakeup

Norris will become the club’s technical director; General Manager Karina LeBlanc promises “global search” for new head coach.

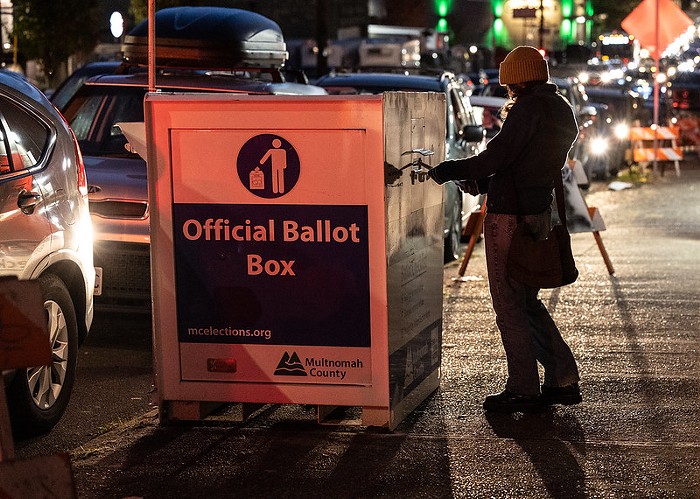